Amazon Web Services (AWS) is generally secure by default, but can be misconfigured and the initial setup lacks enforcement of some best practices. I gave a talk at the AWS Chicago meetup group recently where I mentioned some of the available tools for checking these things. This post provides a survey of the existing tools available to help you discover potential security improvements with AWS accounts.

An example of a best practice these tools check for is whether MFA is enabled on the root account. An example of a potential security issue is a Security Group open to 0.0.0.0/0 (i.e., open to network traffic from anywhere in the world) or an S3 bucket that is world-readable. In both of these cases there can be legitimate and safe reasons for doing this, so keep in mind that some of the issues identified could be false positives for your environment. These tools are just pointing out potential areas of concern.
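To make the Security Group example concrete, here is a minimal sketch of that kind of check. It assumes the input has the shape returned by boto3's `describe_security_groups`; the function names `open_ingress_rules` and `fetch_security_groups` are my own, not taken from any of the tools discussed here.

```python
def open_ingress_rules(security_groups):
    """Return (group_id, from_port, to_port) for every ingress rule open to 0.0.0.0/0."""
    findings = []
    for group in security_groups:
        for perm in group.get("IpPermissions", []):
            cidrs = [r.get("CidrIp") for r in perm.get("IpRanges", [])]
            if "0.0.0.0/0" in cidrs:
                # FromPort/ToPort are absent when the rule covers all protocols.
                findings.append(
                    (group["GroupId"], perm.get("FromPort"), perm.get("ToPort"))
                )
    return findings


def fetch_security_groups(region_name):
    """Fetch all Security Groups in one region (needs boto3 and credentials)."""
    import boto3  # imported lazily so the check above has no dependencies

    ec2 = boto3.client("ec2", region_name=region_name)
    groups = []
    for page in ec2.get_paginator("describe_security_groups").paginate():
        groups.extend(page["SecurityGroups"])
    return groups
```

A finding here is only a candidate issue: a web server's Security Group that is open on port 443 would be reported too.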

There are three ways to gather information about an AWS account:

- Make a bunch of AWS API calls to understand how an account currently exists.

- Monitor CloudTrail logs and alert when potentially concerning changes are made.

- Use AWS Config logs to understand how an account currently exists.

The projects discussed here are all based on making a bunch of API calls. There aren’t many free tools that use the CloudTrail method, although Airbnb’s StreamAlert is doing some work there, and there isn’t anything that uses the AWS Config method.
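For contrast, the CloudTrail method amounts to filtering log records as they arrive. The sketch below is not how StreamAlert works. It only assumes that each record is a dict with the standard CloudTrail fields `eventName`, `eventTime`, and `userIdentity`, and the watchlist is a hypothetical starting point.

```python
# Hypothetical watchlist. These are real CloudTrail event names, but which
# ones deserve an alert is an assumption about your environment.
WATCHED_EVENTS = {
    "AuthorizeSecurityGroupIngress",
    "PutBucketAcl",
    "StopLogging",
    "DeleteTrail",
}


def concerning_events(records):
    """Return (time, event name, caller ARN) for each watched event."""
    return [
        (
            record.get("eventTime"),
            record.get("eventName"),
            record.get("userIdentity", {}).get("arn"),
        )
        for record in records
        if record.get("eventName") in WATCHED_EVENTS
    ]
```

CloudTrail delivers log files as JSON objects with a top-level `Records` list, so `concerning_events(json.load(f)["Records"])` would be the typical call.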

To use these tools, you should run them on an EC2 instance that has an IAM role associated with it that provides the Security Audit permissions. Alternatively, you can configure your AWS CLI tools to use an AWS key, and the boto3 library that many of these tools use will pick up that key. Using AWS keys is not recommended, but I mention it because you can use the key provided for level 6 of the flAWS challenge, which has these privileges and will allow you to run these tools against that account. The flAWS account has a couple of issues that will be identified. The AWS services mentioned here (AWS Trusted Advisor and AWS Config) cannot be used via this method.

The available tools are:

AWS Trusted Advisor

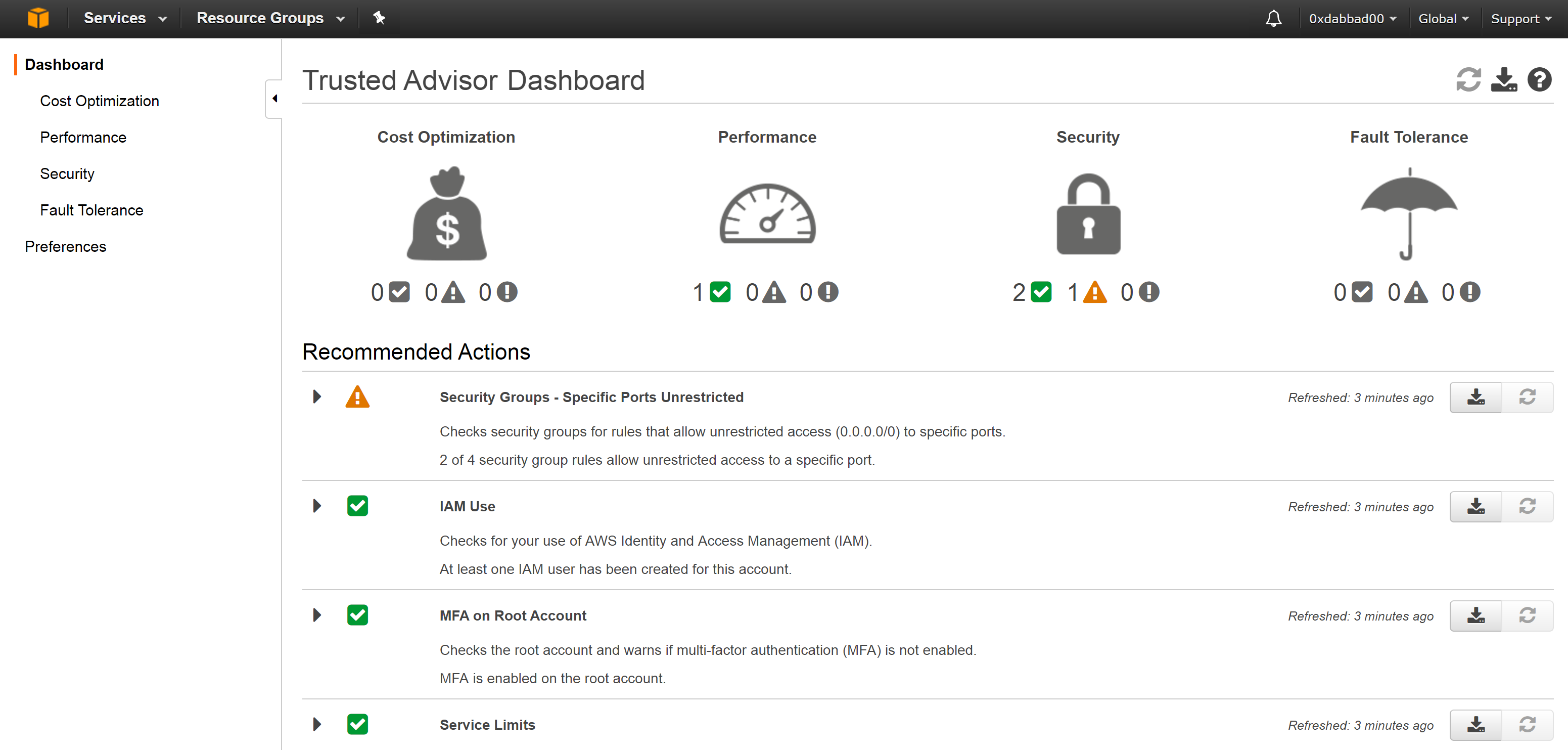

The AWS Trusted Advisor service was released in July 2014 and comes free with your AWS account. It provides not only security checks, but also cost optimization, performance, and fault tolerance checks. Simply visit console.aws.amazon.com/trustedadvisor/.

Three checks come with all accounts, and if you pay $100/mo or more for a Business support plan from AWS, you get 15 more checks. The initial three security checks are:

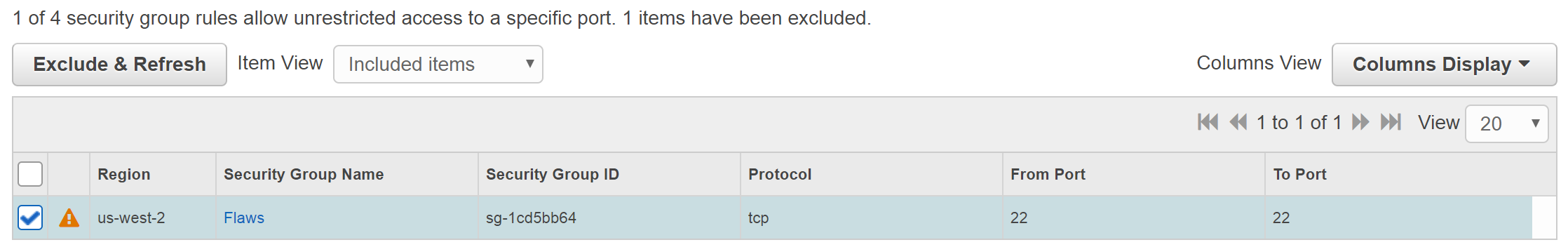

- Are any Security Groups open to ingress 0.0.0.0/0 for certain ports? Ports like 80 (HTTP) and 443 (HTTPS) are ok, but it goes red if 3306 (MySQL) and some other specific ports are open. If 22 (SSH) or any other port is open, it goes yellow.

- Have you created at least one other IAM user, in hopes that you aren’t solely using the root account?

- Does the root account have MFA?
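The color logic described for the first check can be sketched as a small lookup. This mirrors only what is stated above, not Trusted Advisor's actual implementation. The red set contains just 3306 because the other "specific ports" are not listed here.

```python
GREEN_PORTS = {80, 443}  # HTTP and HTTPS are treated as fine when open
RED_PORTS = {3306}       # MySQL; the remaining red ports are not listed above


def classify_open_port(port):
    """Classify a port that is open to 0.0.0.0/0 as green, red, or yellow."""
    if port in GREEN_PORTS:
        return "green"
    if port in RED_PORTS:
        return "red"
    # SSH (22) and every other port fall through to a warning.
    return "yellow"
```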

Once you pay for support, the other security checks are:

- Checks S3 buckets have no loose permissions.

- Checks a password policy is set.

- Various Security Group checks, such as specific ones to ensure RDS databases are not exposed.

- Checks Route53, for each MX record, ensures a TXT record contains a corresponding SPF value to deny email spoofing.

- Checks CloudTrail is on.

- Checks ELB listeners use good SSL policies.

- Checks if SSL certs will expire soon.

- Checks if IAM keys have been rotated in the past 90 days.

- Checks for exposed access keys.
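Most of these checks are simple to reproduce yourself. As an illustration, the key rotation check could look roughly like the sketch below, which assumes the input is the `AccessKeyMetadata` list returned by boto3's `iam.list_access_keys`. The function name and the use of creation date as a stand-in for "last rotated" are my assumptions.

```python
from datetime import datetime, timedelta, timezone


def stale_access_keys(key_metadata, now=None, max_age_days=90):
    """Return the IDs of active access keys created more than max_age_days ago."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [
        key["AccessKeyId"]
        for key in key_metadata
        # Inactive keys cannot be used, so only active ones are reported.
        if key.get("Status") == "Active" and key["CreateDate"] < cutoff
    ]
```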

The last check for “Exposed Access Keys” is interesting, as it’s looking for issues outside of your account. According to Amazon, this “checks popular code repositories for access keys that have been exposed to the public and for irregular Amazon Elastic Compute Cloud (Amazon EC2) usage that could be the result of a compromised access key.” This basically means they are scanning GitHub for your keys, and that they are looking for large numbers of EC2 instances optimized for bitcoin mining suddenly spinning up in all regions.
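Amazon does not describe how its scanning works, but you can run a rough version of the idea against your own repositories. This sketch assumes that long-term IAM access key IDs are 20 characters starting with `AKIA`. It will miss other credential types and can match strings that are not real keys.

```python
import re

# Assumption: long-term access key IDs are "AKIA" plus 16 uppercase
# alphanumeric characters. Secret keys are not matched by this pattern.
ACCESS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")


def find_access_key_ids(text):
    """Return every substring of text that looks like an access key ID."""
    return ACCESS_KEY_ID.findall(text)
```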

AWS Trusted Advisor is oddly slow. It takes a while for it to initially show anything, and then if you want to hide things, such as a security group that is open to 0.0.0.0/0, then it will take about 5 minutes to refresh.

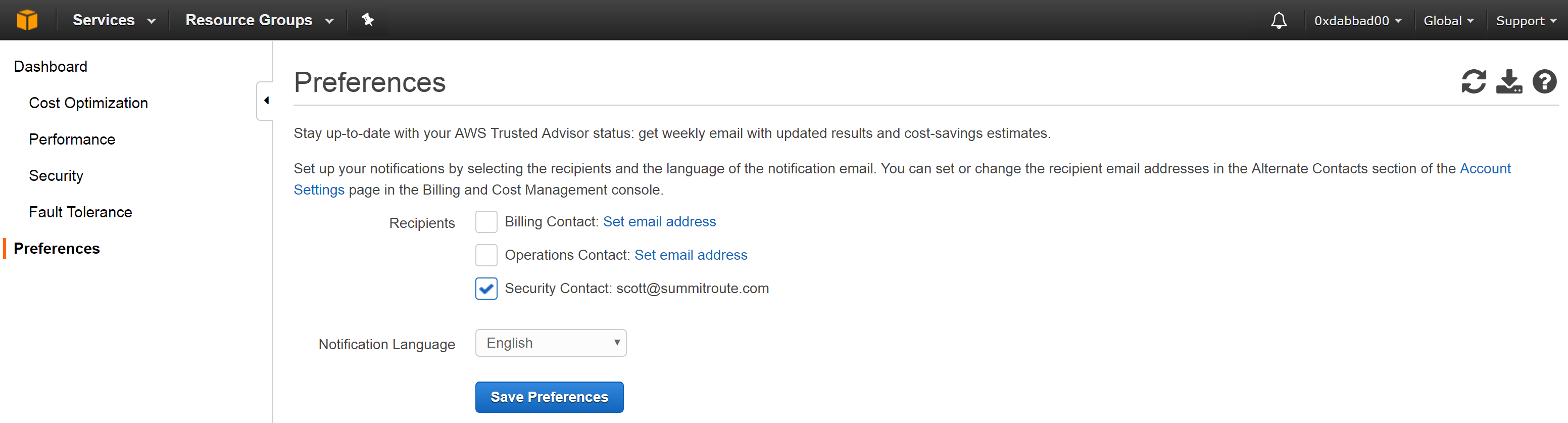

If you don’t want to check this page, you’ll need to set who to alert in the preferences at console.aws.amazon.com/trustedadvisor/home#/preferences.

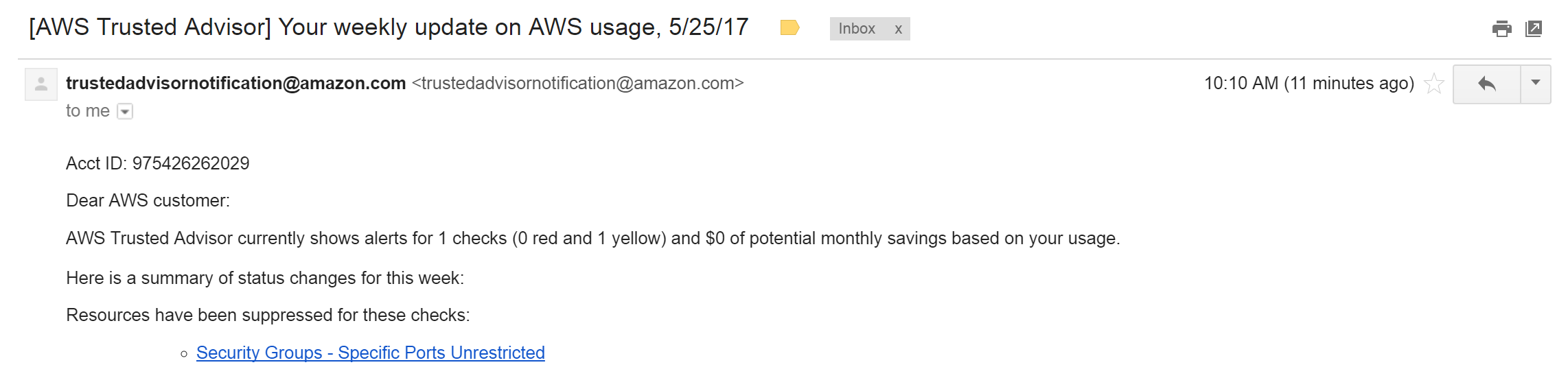

This results in weekly emails like the following, which aren’t very useful.

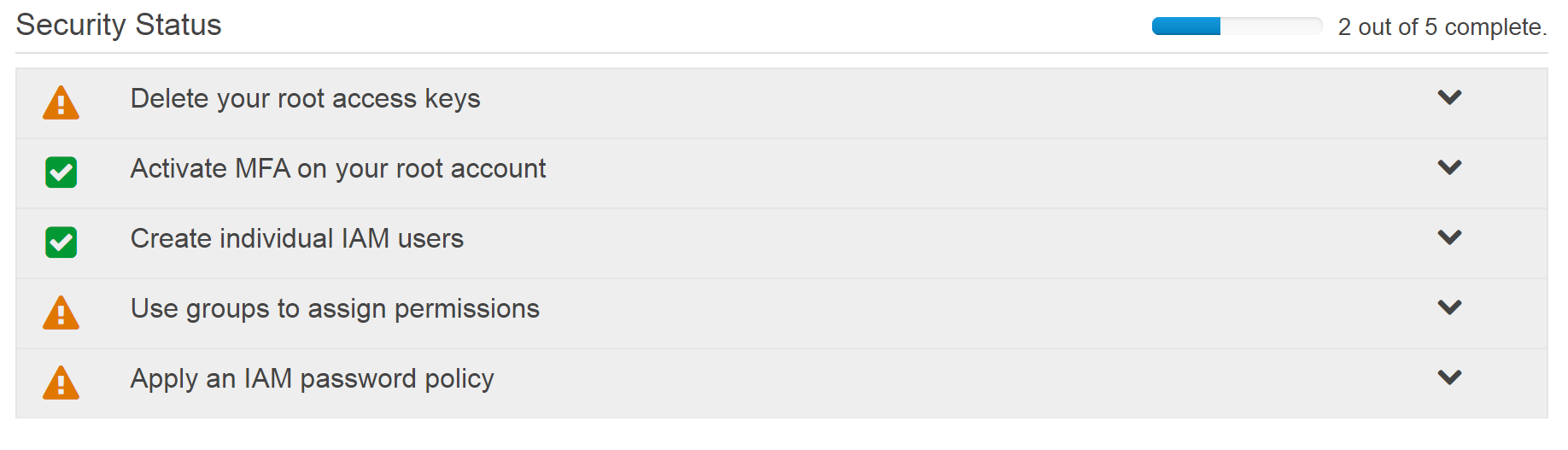

Similarly, if you look at https://console.aws.amazon.com/iam/ in your account, you’ll see a “Security Status” section that includes green checks for IAM best practices that have been completed and warning triangles for issues that still need to be done.

In comparison with the alternatives, some aspects of AWS Trusted Advisor make it feel more like a box-ticking solution that Amazon created just to say they had it. It is also odd that they don’t simply provide all the checks for free, as they cost Amazon nothing to run, other than the GitHub scanning check, which none of the other tools offer. That said, it provides at least some checks for free and is built into your account with no work for you to do, so you should review it periodically.

AWS Config

Amazon introduced AWS Config in November 2014. AWS Config can be thought of as two things:

- A recording of what is in your account throughout time.

- A rules engine that can evaluate these items and generate alerts via SNS.

AWS Config records information about all the “Configuration Items” (e.g., EC2 instances, S3 buckets, Security Groups) in your account throughout time and stores this information in an S3 bucket. AWS Config is sort of a hybrid between CloudTrail logs and making a bunch of AWS API calls to find out more information about resources. It also provides some minimal searching through them. For example, to get info about a specific EC2 instance, you can query:

aws configservice get-resource-config-history --resource-type "AWS::EC2::Instance" --resource-id "i-05bef8a081f307783"

You can then add options such as --earlier-time to look at how that EC2 instance was configured at some prior date. This aspect of AWS Config is similar to Netflix’s Edda. Edda collects information about your AWS environment and stores it in MongoDB, and then provides a REST API to allow the data there to be queried. Edda provides more useful querying functionality than AWS Config, but AWS Config can be set up in a matter of clicks, and once you have the data you could always grep through the S3 bucket for the info you want, which might be easier to decipher than CloudTrail logs when you want to understand how your environment looked at some point in the past.
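The same lookup can be done from Python. The following is a sketch using boto3's `get_resource_config_history`, where `earlierTime` corresponds to the CLI's `--earlier-time`. The helper names are mine, and the instance ID in the usage note is the one from the command above.

```python
def history_request(resource_id, earlier_time=None,
                    resource_type="AWS::EC2::Instance"):
    """Build the keyword arguments for get_resource_config_history."""
    kwargs = {"resourceType": resource_type, "resourceId": resource_id}
    if earlier_time is not None:
        # A datetime bounding the start of the time window to search.
        kwargs["earlierTime"] = earlier_time
    return kwargs


def fetch_history(resource_id, earlier_time=None):
    """Return recorded configuration items (needs boto3 and credentials)."""
    import boto3  # imported lazily so history_request works without it

    config = boto3.client("config")
    response = config.get_resource_config_history(
        **history_request(resource_id, earlier_time)
    )
    return response["configurationItems"]
```

For example, `fetch_history("i-05bef8a081f307783")` would return the recorded items for that instance, assuming AWS Config is recording in the account and region your credentials point to.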

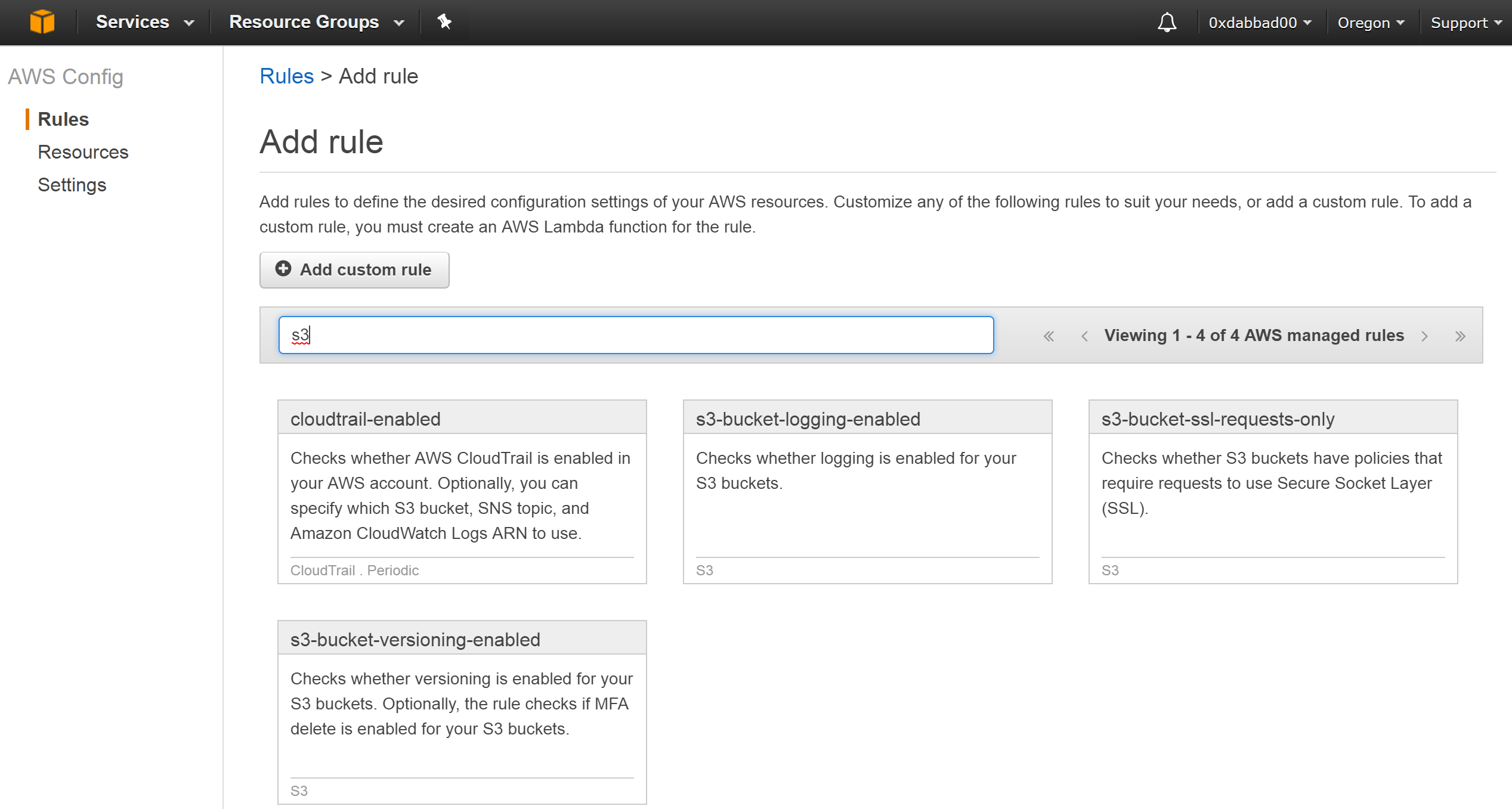

Storing this information costs $0.003 per resource recorded, but the bigger expense is the rules, which cost $2/rule/month. Rules are evaluated either when changes are detected, or you can set them to be tested periodically whether or not any changes are made.
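To get a feel for how those two prices combine, here is a back-of-the-envelope estimate. It uses only the figures quoted above and ignores S3 storage and SNS charges. The function name is my own.

```python
def monthly_config_cost(items_recorded, active_rules,
                        per_item=0.003, per_rule=2.0):
    """Estimate a monthly AWS Config bill in dollars from the quoted prices."""
    return items_recorded * per_item + active_rules * per_rule
```

With 1,000 recorded items and 5 rules in a month, that is $3 for recording plus $10 for rules, so the rules dominate quickly.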

There are currently 32 pre-existing rules from Amazon you can use, and you can create your own. You can also view the community-created rules, most of which are duplicates of the ones provided by Amazon. Adding Amazon’s rules is easy.

When issues are detected it will fire an SNS notification.

Scout2

In 2012, Loïc Simon at iSEC Partners (now part of NCC Group) released a tool called Scout for auditing AWS environments. In 2014, they released a new version named Scout2. The open-source Scout2 project is focused toward pentesters doing one-time audits.

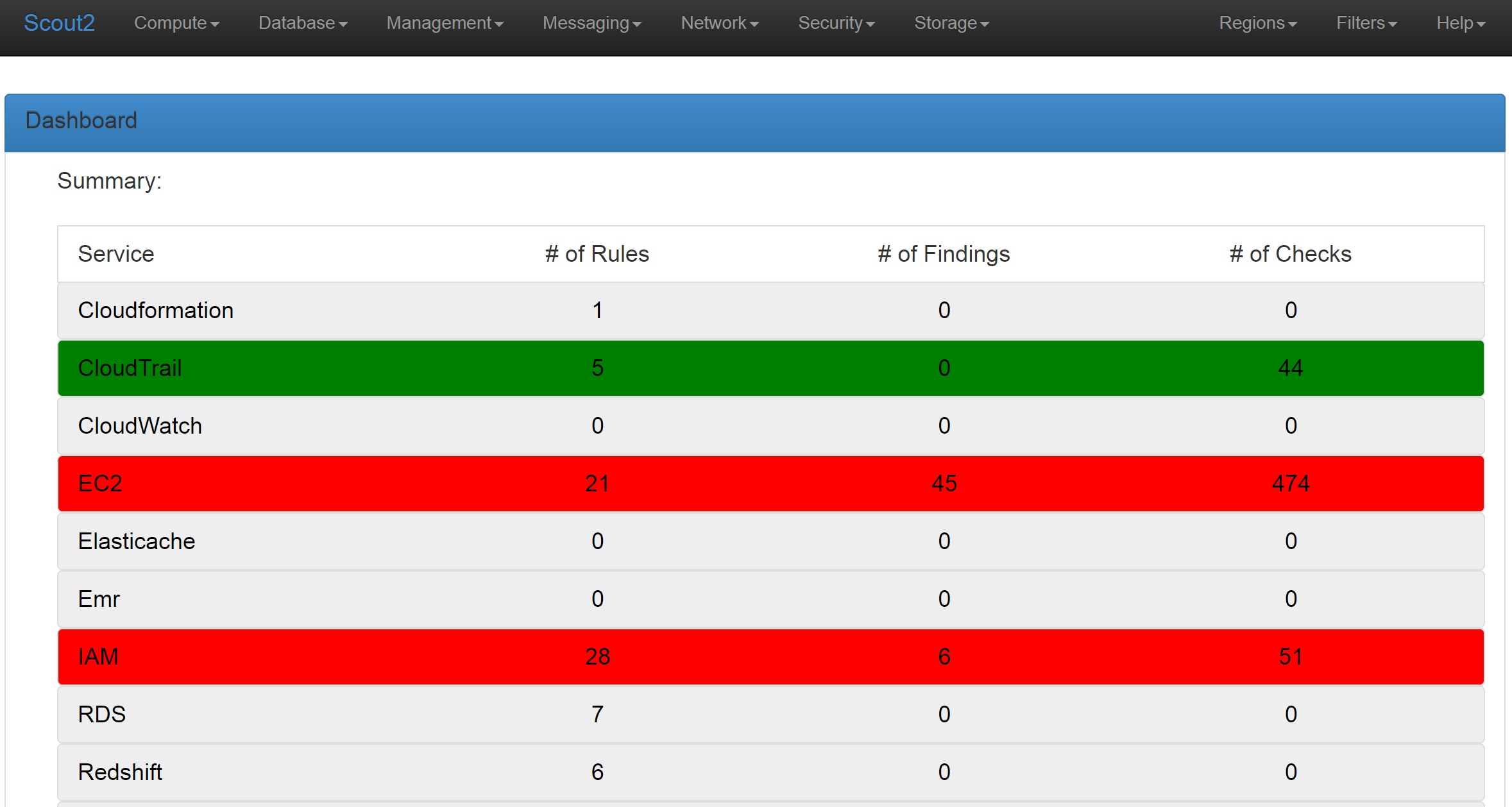

When you run the Scout2 command, it generates a static web directory, allowing you to open the created report.html file in your browser. Scout2 is a little buggy, so I’ve found I need to reload the pages sometimes when clicking on things didn’t work, but it gives a nice UI.

Here is the main screen.

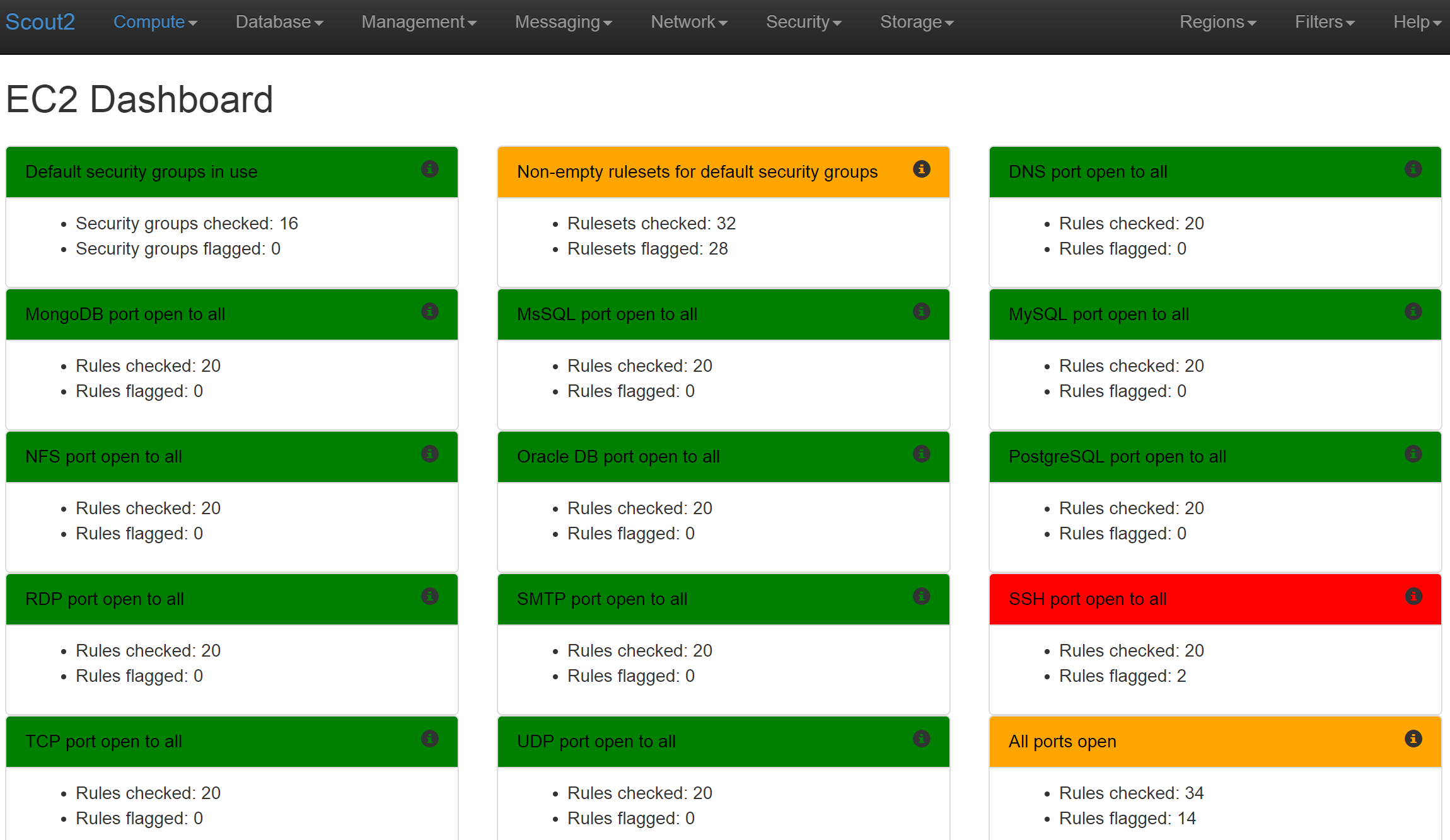

Clicking over to the EC2 Dashboard gives us a summary of all the issues for that service.

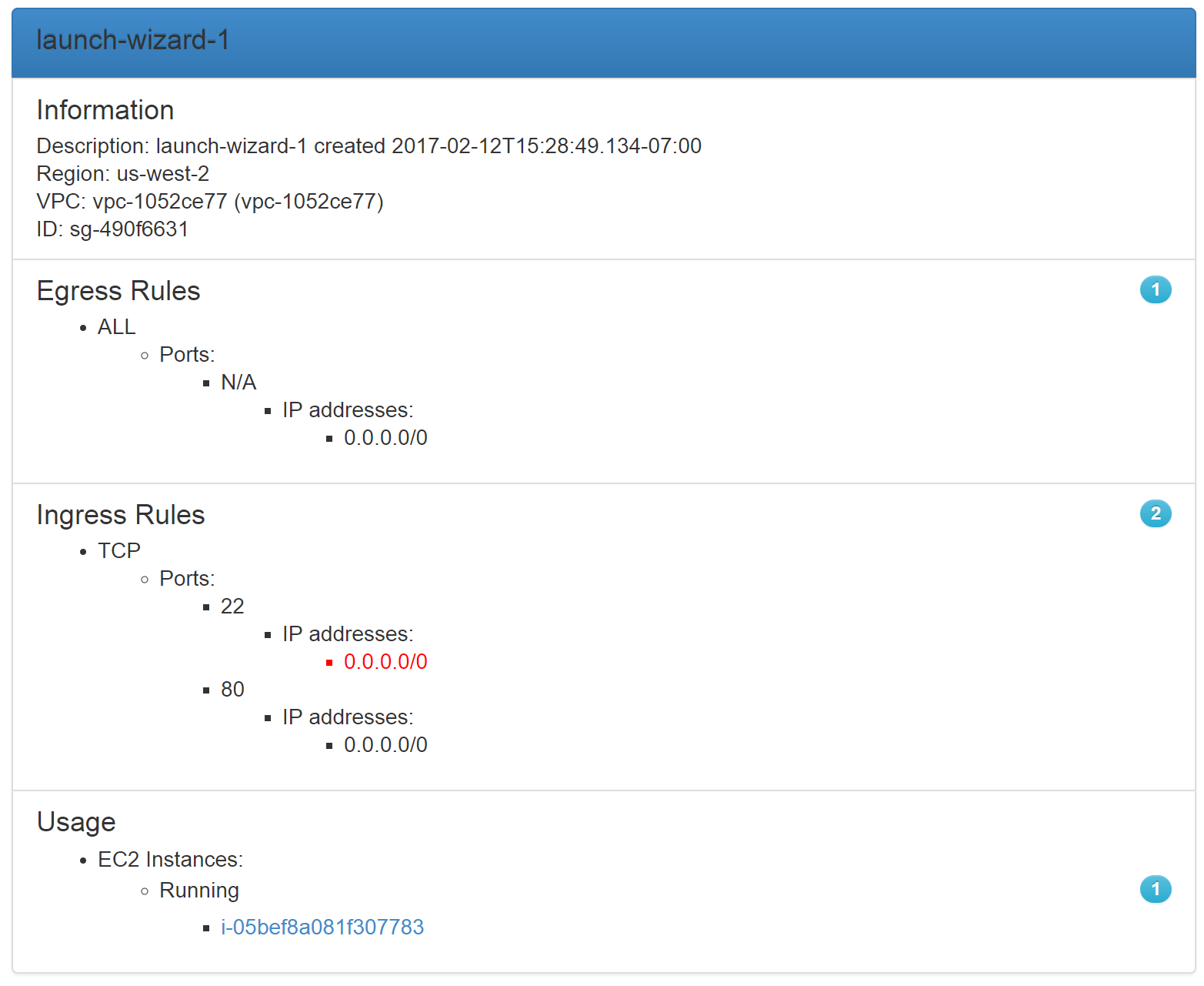

Looking at the “SSH port open to all” in red, we can see all the security groups open to 0.0.0.0/0.

It also shows what instances that Security Group is applied to.

Prowler

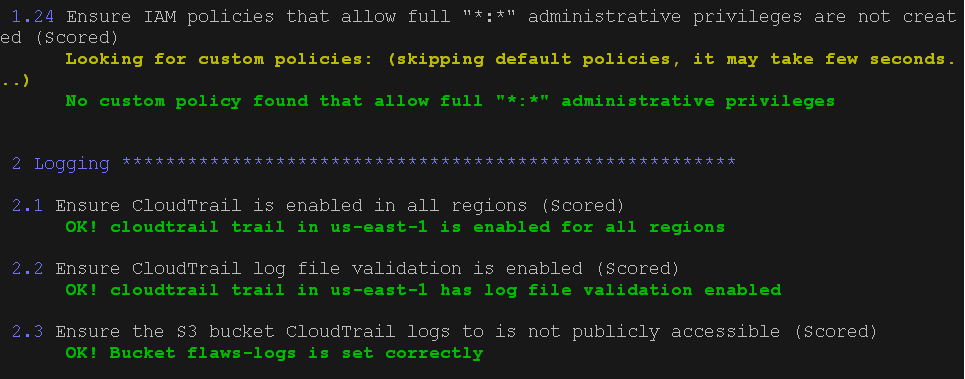

Toni de la Fuente (@ToniBlyx) at Alfresco released prowler in September 2016. It was made to check the items from the CIS Amazon Web Services Foundations Benchmark.

This tool is solely focused on the issues from that report, and is the only tool that generates a single report (albeit console based) to read through as opposed to an application you need to know how to click through and use.

Security Monkey

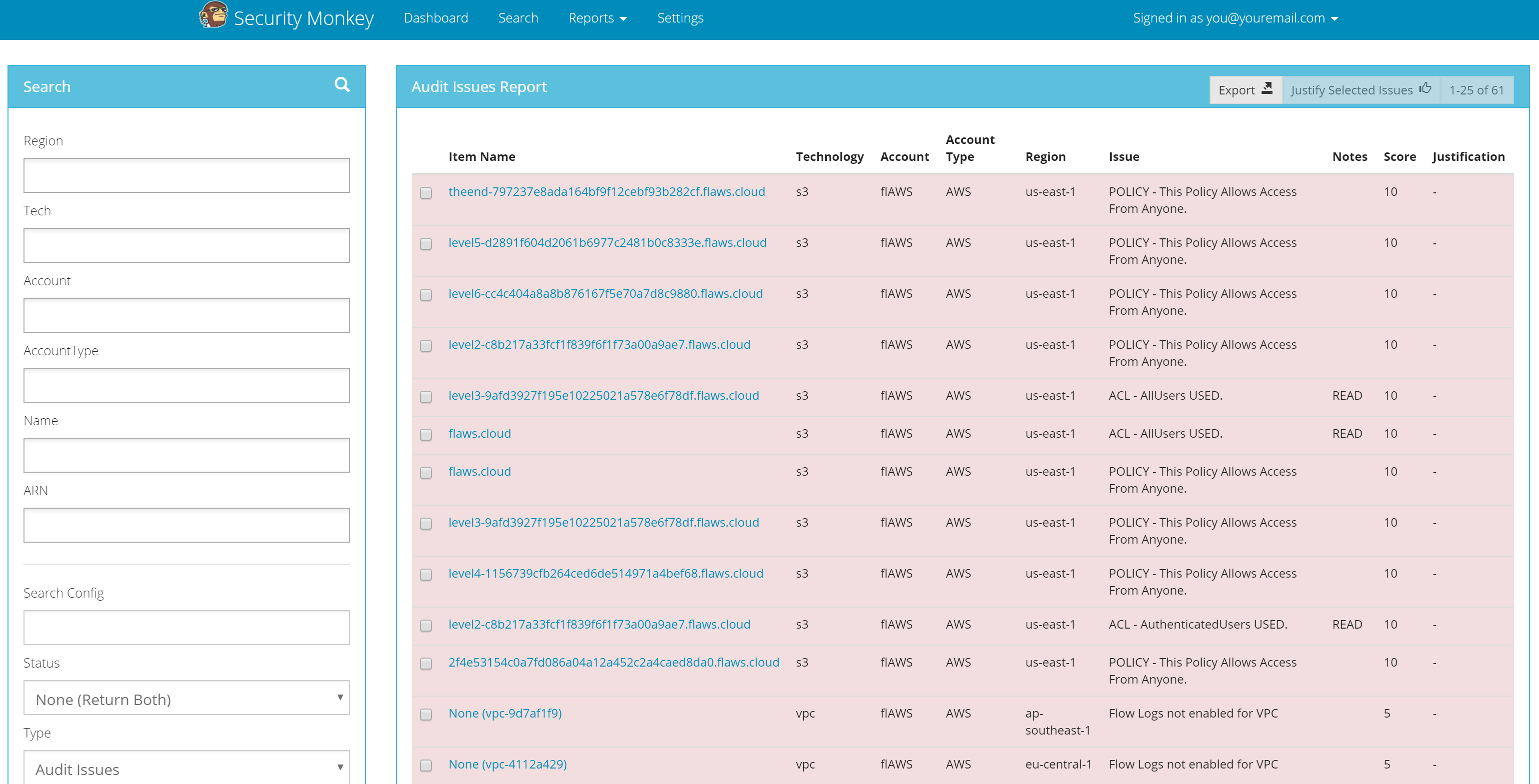

Netflix’s Security Monkey was released back in 2014 and detects issues for both AWS and Google Cloud Platform (GCP). Security Monkey is expected to be deployed on its own EC2 instance and needs a PostgreSQL backend. It can repeatedly scan multiple accounts and generate alerts. Once alerted, security teams can browse a list of issues in its UI to review and justify or remediate.

Here is the main screen showing issues found.

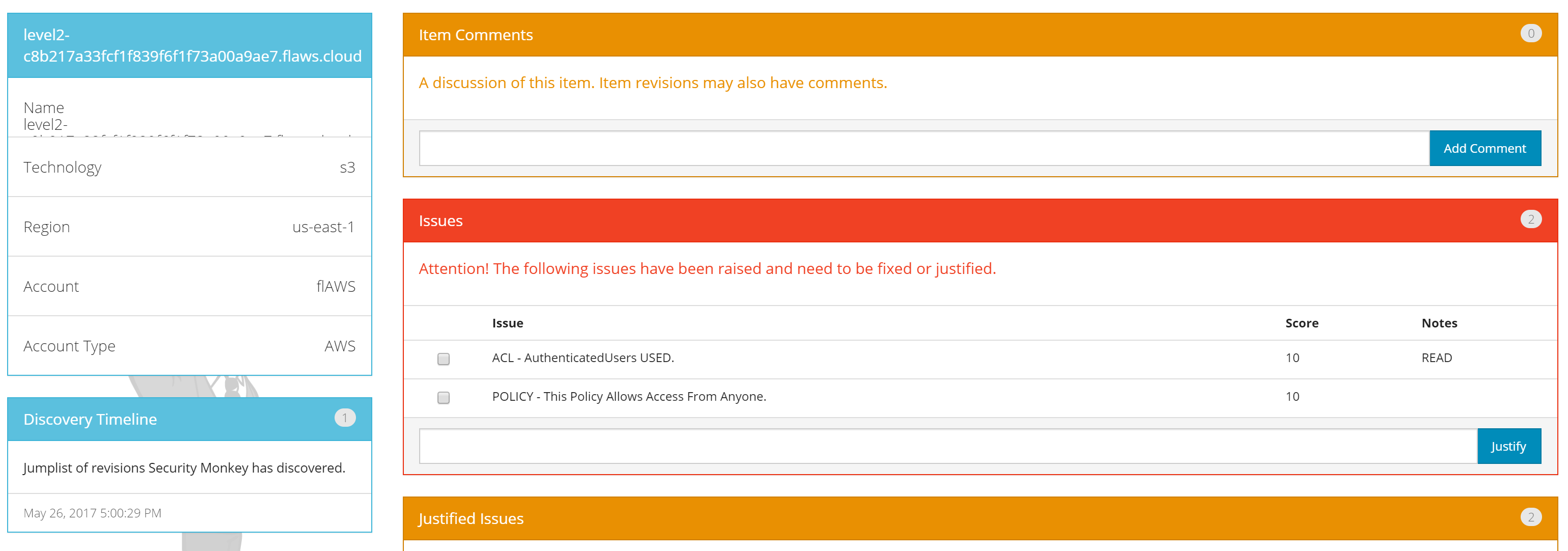

This image shows a selected issue and the ability to justify it. Notice that multiple issues for a single resource are grouped here, allowing you to justify all or some of the issues for that resource.

This image shows the details for that resource to help you better understand what is being identified.

You can see the code for Security Monkey’s checks here.

Cloud Custodian

CapitalOne’s Cloud Custodian was introduced in May 2016. It doesn’t just detect issues like the other tools, but actually enforces compliance with an organization’s rules. It does this via the heavy-handed method of, in some cases, simply killing anything that isn’t in compliance. Its sample policy includes the following:

- If it finds any EC2 instances that do not have encrypted EBS volumes, it simply terminates those instances.

- If any EC2 instances are not tagged with an Environment, OwnerContact, or some other tags, it stops those instances in 4 days.

- If an ELB does not use a white-listed SSL policy, it deletes that ELB.
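Cloud Custodian policies are written in its own YAML format, which I won't reproduce here. To show the decision logic of the first two rules, here is a sketch in Python. The instance dictionary shape (`Volumes` with an `Encrypted` flag, `Tags` as key/value pairs) is a simplification I made up for illustration, and the required tag set contains only the two tags named above.

```python
# Only the two tags named above; the sample policy requires others as well.
REQUIRED_TAGS = {"Environment", "OwnerContact"}


def decide_action(instance):
    """Return the enforcement action for one instance in the simplified shape."""
    volumes = instance.get("Volumes", [])
    if any(not volume.get("Encrypted", False) for volume in volumes):
        return "terminate"
    tag_keys = {tag["Key"] for tag in instance.get("Tags", [])}
    if not REQUIRED_TAGS <= tag_keys:
        return "stop-in-4-days"
    return "none"
```

The encryption rule is checked first, so an instance that fails both checks is terminated rather than given the 4-day grace period.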

The expectation behind Cloud Custodian is that you will run it regularly via a scheduled task. In order to enforce its policies, Cloud Custodian will need far more than just the Security Audit permissions, as it needs to take actions such as stopping EC2 instances. The project does not have many example rules, as it is expected you’ll write your own policies. It is also focused on reducing costs by ensuring some instances are stopped overnight.

Others

reddalert

Prezi’s reddalert was released in 2014 and uses Netflix’s Edda (discussed earlier in the AWS Config section), as opposed to the AWS API directly like the other tools. reddalert no longer appears to be maintained.

CloudSploit

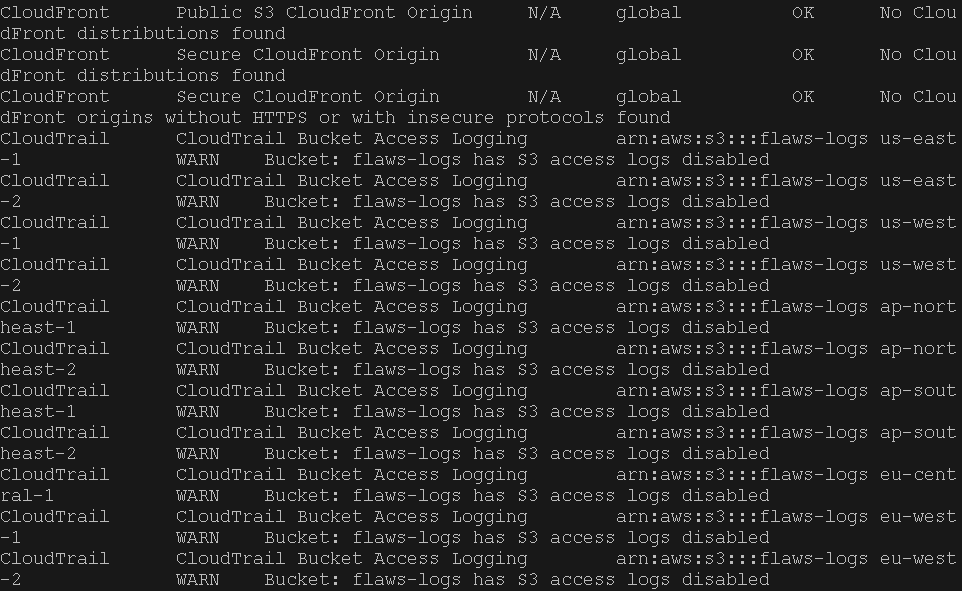

CloudSploit is a paid service, but it has two free options. One lets you use their website to run a manual scan. The other is their open-sourced engine and rules, which you can run yourself from their project at github.com/cloudsploit/scans. You’ll want to search the output for any lines that don’t include the text “OK”.
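That search is a one-liner with `grep -v OK`, or in Python a small filter like the sketch below. It assumes nothing about the output format beyond passing results containing the text "OK", and the lines in the usage note are hypothetical.

```python
def non_ok_lines(output):
    """Return the non-empty lines of scan output that do not contain 'OK'."""
    return [
        line
        for line in output.splitlines()
        if line.strip() and "OK" not in line
    ]
```

For example, given the hypothetical lines `"check A: OK"` and `"check B: FAIL"`, only the second is returned.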

Conclusion

The tools mentioned check for AWS best practices. They will not detect all possible problems that could exist in your AWS account (ex. you might be running old unpatched software in an EC2), and they will flag “issues” that you don’t really need to worry about (ex. an S3 bucket that is world-readable, but that is hosting public content for your website). You will want to tweak these tools to add and possibly remove checks that are unique to your environment. Some things you want to check for must also be done manually, as there are no API calls for them, for example checking that there is a security contact for the account.
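As an example of that kind of tweak, here is a sketch of a world-readable bucket check with an allowlist for buckets that are public on purpose. It assumes ACLs in the shape returned by boto3's `get_bucket_acl` and only looks at ACL grants, not bucket policies. The function names and the `known_public` parameter are hypothetical.

```python
ALL_USERS_URI = "http://acs.amazonaws.com/groups/global/AllUsers"


def is_world_readable(acl):
    """Return True if the ACL grants READ or FULL_CONTROL to everyone."""
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if (grantee.get("URI") == ALL_USERS_URI
                and grant.get("Permission") in ("READ", "FULL_CONTROL")):
            return True
    return False


def flagged_buckets(acls_by_bucket, known_public=frozenset()):
    """Return world-readable bucket names, skipping intentionally public ones."""
    return sorted(
        name
        for name, acl in acls_by_bucket.items()
        if is_world_readable(acl) and name not in known_public
    )
```

Keeping the allowlist explicit means that a bucket hosting public website content stops generating noise, while a newly exposed bucket still gets flagged.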

There currently is not a single tool that includes all of the checks from the other tools, and none of the tools do much for explaining why you should do what the check is looking for, or how to do it. I plan to improve that.

My general advice is first to look at the AWS Trusted Advisor for your account. Do that right now if you’ve never done it, as it’s a built-in service to easily point out security issues quickly to you, along with potential cost savings, performance, and fault tolerance issues.

Scout2 and prowler are easy to get running quickly, but they are more geared towards auditors doing a one-time check. Security Monkey is my recommendation for security teams wanting to monitor their environments, but it takes a bit more work to install and configure. If you’d prefer to use an AWS service, you could use AWS Config instead. If you want to not only be alerted to issues, but actually force things into compliance, you’ll want to use Cloud Custodian.