The main AWS conference of the year, re:Invent, happened recently in Las Vegas. AWS releases many things in the days leading up to it, so this post will be a summary of some of the main AWS security announcements over the past two months. I’ve written similar reviews for re:Invent in 2018 and 2017.

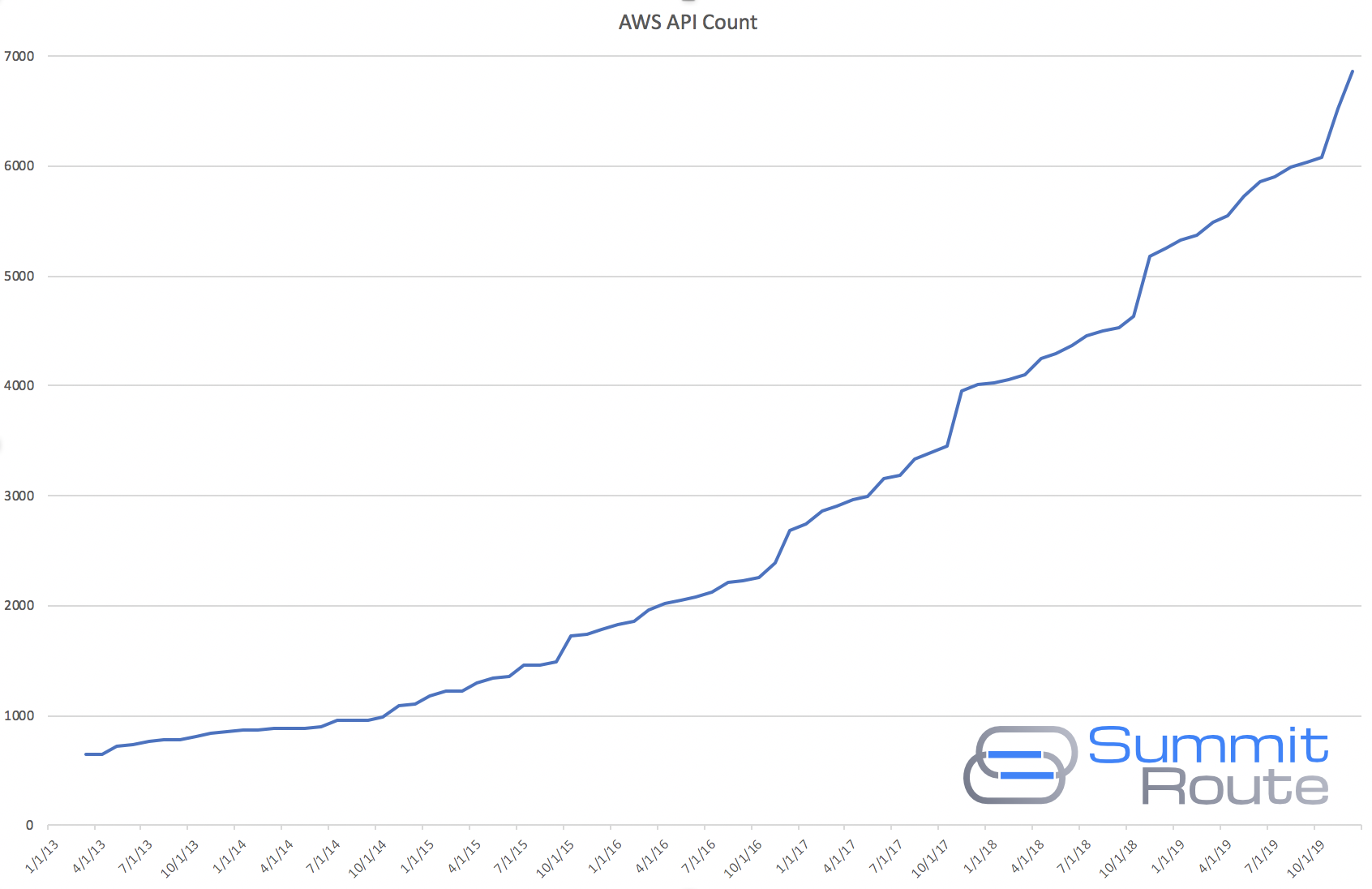

To show how much new stuff AWS releases, the following chart shows the growth of AWS API calls over the past 7 years. You’ll notice sudden jumps around November, when re:Invent happens.

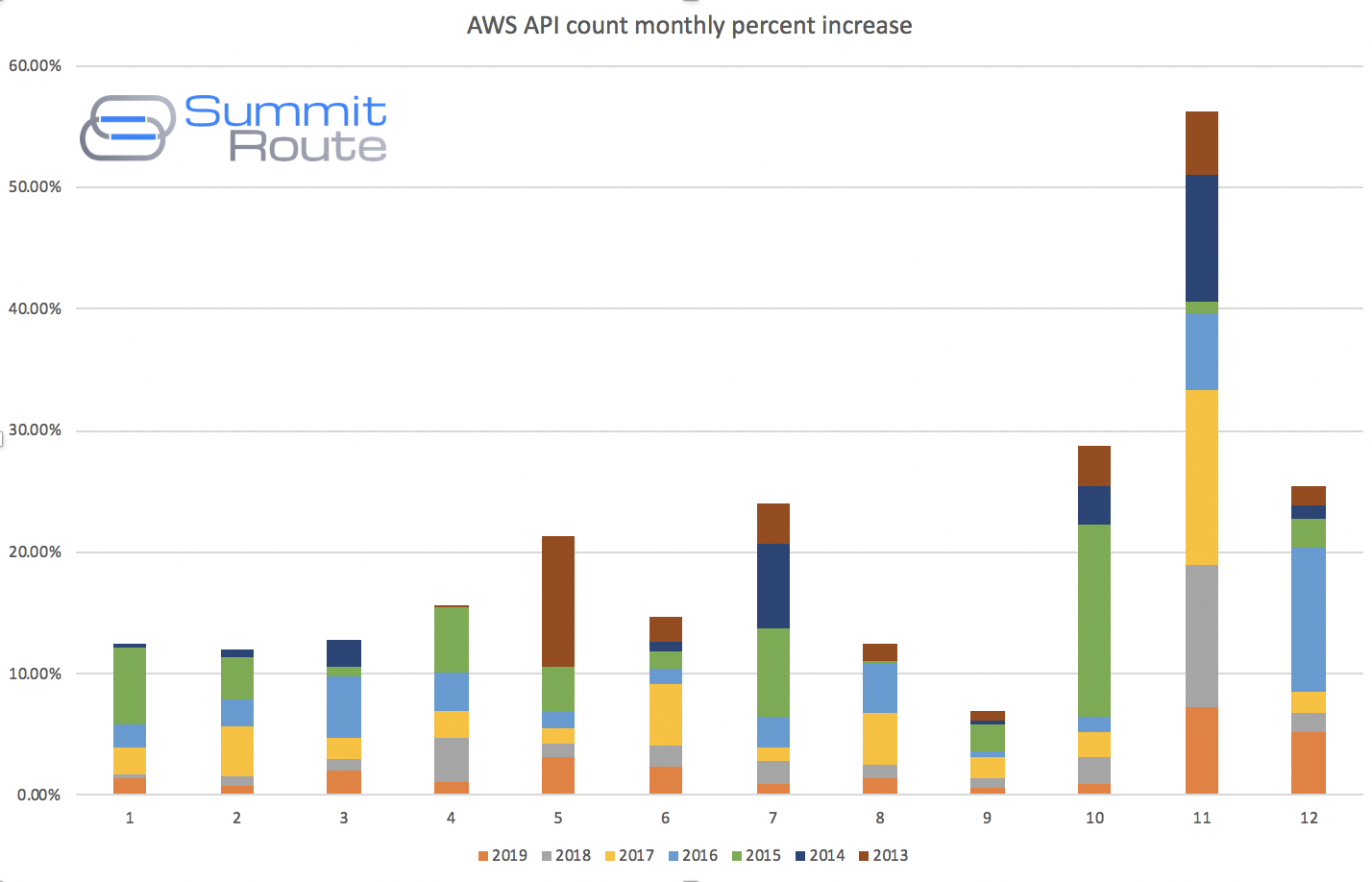

This next chart shows the percent increase by month as a stacked bar chart, where the colors represent the different years. I’ve included this to show how crazy re:Invent is in comparison to the rest of the year. If your company is looking for help keeping on top of all these changes, I provide a 2-day AWS security training class. Reach out to me for more info!

Access Analyzer and CloudTrail Insights

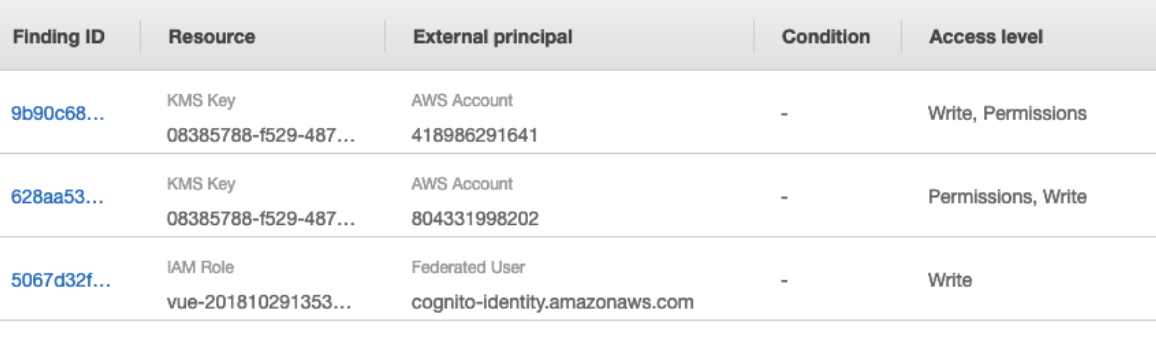

Access Analyzer (video) identifies if various resources are publicly accessible or accessible from other AWS accounts. It currently works with S3 buckets, KMS keys, SQS queues, IAM roles, and Lambda functions. This functionality has existed in many other tools for years now, including one line of bash in Prowler, but the benefit of this is that it is free and built into AWS, so it becomes more of AWS’s responsibility to figure out when these things are public. There are situations where this can be tougher to figure out, such as identifying the confused deputy problems of Lambdas.
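
To make the idea concrete, here is a minimal sketch of the kind of check that simpler tools perform on a resource policy: list the principals outside your own account that an Allow statement names. This is not how Access Analyzer works internally, and it is far less thorough. The function names, account IDs, and bucket name are hypothetical, and the sketch skips any statement with a Condition instead of evaluating it.

```python
def _as_list(value):
    """IAM policy fields may hold a single item or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def external_principals(policy, own_account_id):
    """Return principals outside own_account_id named by Allow statements.

    Simplifications (assumptions of this sketch):
    - statements with any Condition are skipped rather than evaluated
    - only the "AWS" principal type is inspected
    - account ownership is a crude substring match on the principal string
    """
    found = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow" or "Condition" in stmt:
            continue
        principal = stmt.get("Principal", {})
        if principal == "*":
            found.append("*")  # anyone, i.e. public
            continue
        if not isinstance(principal, dict):
            continue
        for arn in _as_list(principal.get("AWS", [])):
            if arn == "*" or own_account_id not in arn:
                found.append(arn)
    return found


# Hypothetical bucket policy owned by account 111111111111.
bucket_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Principal": "*", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::example-bucket/*"},
        {"Effect": "Allow",
         "Principal": {"AWS": "arn:aws:iam::222222222222:root"},
         "Action": "s3:ListBucket",
         "Resource": "arn:aws:s3:::example-bucket"},
        {"Effect": "Allow",
         "Principal": {"AWS": "arn:aws:iam::111111111111:role/app"},
         "Action": "s3:PutObject",
         "Resource": "arn:aws:s3:::example-bucket/*"},
    ],
}

print(external_principals(bucket_policy, "111111111111"))
# ['*', 'arn:aws:iam::222222222222:root']
```

The cases this sketch ignores (conditions, service principals, interactions between multiple policies) are exactly where a built-in service has an advantage over a one-liner.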

There are already a handful of services on AWS that detect when an S3 bucket has been made public (ex. Trusted Advisor, Macie, S3 itself, and AWS Config Rules), but it looks like this will be where AWS focuses their efforts on identifying public resources in the future. There are also still a lot of services that can be made public that AWS is not yet detecting with this service, such as Glacier (which by the way, AWS’s own documentation used to advise customers to make public, so that’s a common issue).

CloudTrail Insights advertises itself as being able to identify spikes in resource provisioning, bursts of IAM actions, or gaps in periodic maintenance activity. It doesn’t make any sense that this wasn’t built into GuardDuty, as it will likely detect many of the same events, and it costs roughly the same as that service. I don’t think there is enough benefit in turning this on. This seems like someone on the CloudTrail team found out that releasing a new feature would get them a promotion, instead of being incentivized to interact with the GuardDuty team, or focusing on something more useful, like documenting what actions are actually recorded by CloudTrail. A better solution anyway would be to use the newly released CloudTrail Application Anomaly Detection (video) by Will Bengston and Travis McPeak.

Both Access Analyzer and CloudTrail Insights are yet another set of disparate security features to turn on. For Access Analyzer, you’ll have to set up CloudWatch Events aggregation (I describe one method here). CloudTrail Insights records its alerts to S3, which makes aggregation easier, but that is delayed by 30 minutes. A month ago GuardDuty also announced that you could aggregate alerts from multiple regions by using S3, which incurs a 5 minute delay for them. AWS has a dozen queue and message bus services for real-time monitoring, and yet S3, which inherently results in delays, appears to be their favorite service for this.
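
For the CloudWatch Events side of that aggregation, a rule needs an event pattern that matches Access Analyzer findings. The sketch below builds one. The `source`, `detail-type`, and `status` values reflect my understanding of the events Access Analyzer emits, so treat them as assumptions and confirm them against the AWS documentation before relying on them. The function name is hypothetical.

```python
import json


def access_analyzer_event_pattern(statuses=("ACTIVE",)):
    """Build a CloudWatch Events pattern for Access Analyzer findings.

    Assumed (verify against AWS docs): findings arrive with source
    "aws.access-analyzer", detail-type "Access Analyzer Finding", and a
    "status" field inside "detail".
    """
    return {
        "source": ["aws.access-analyzer"],
        "detail-type": ["Access Analyzer Finding"],
        "detail": {"status": list(statuses)},
    }


# The JSON printed here is what you would paste into the rule definition.
print(json.dumps(access_analyzer_event_pattern(), indent=2))
```

A rule with this pattern would be created in every region where an analyzer runs, each forwarding to one central target.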

Amazon Detective

Amazon Detective is a service to help you investigate security incidents. It is still in private beta, so most of the public information is in this video. Although it uses a graph database and the technology from the Sqrrl acquisition, it appears to only show you histograms of API request volume and GeoIP maps, which you could build yourself in ELK/Splunk/QuickSight.

Having these views pre-built and maintained for you is the main benefit. However, of the security investigation solutions offered by the 3 main cloud providers, Azure’s Sentinel is by far the strongest offering. The Azure team even did a blog post on how to analyze an AWS incident using Sentinel, which is a kick in the teeth to AWS: Azure can investigate security incidents on AWS more effectively than AWS’s own solution can.

S3 Access Points

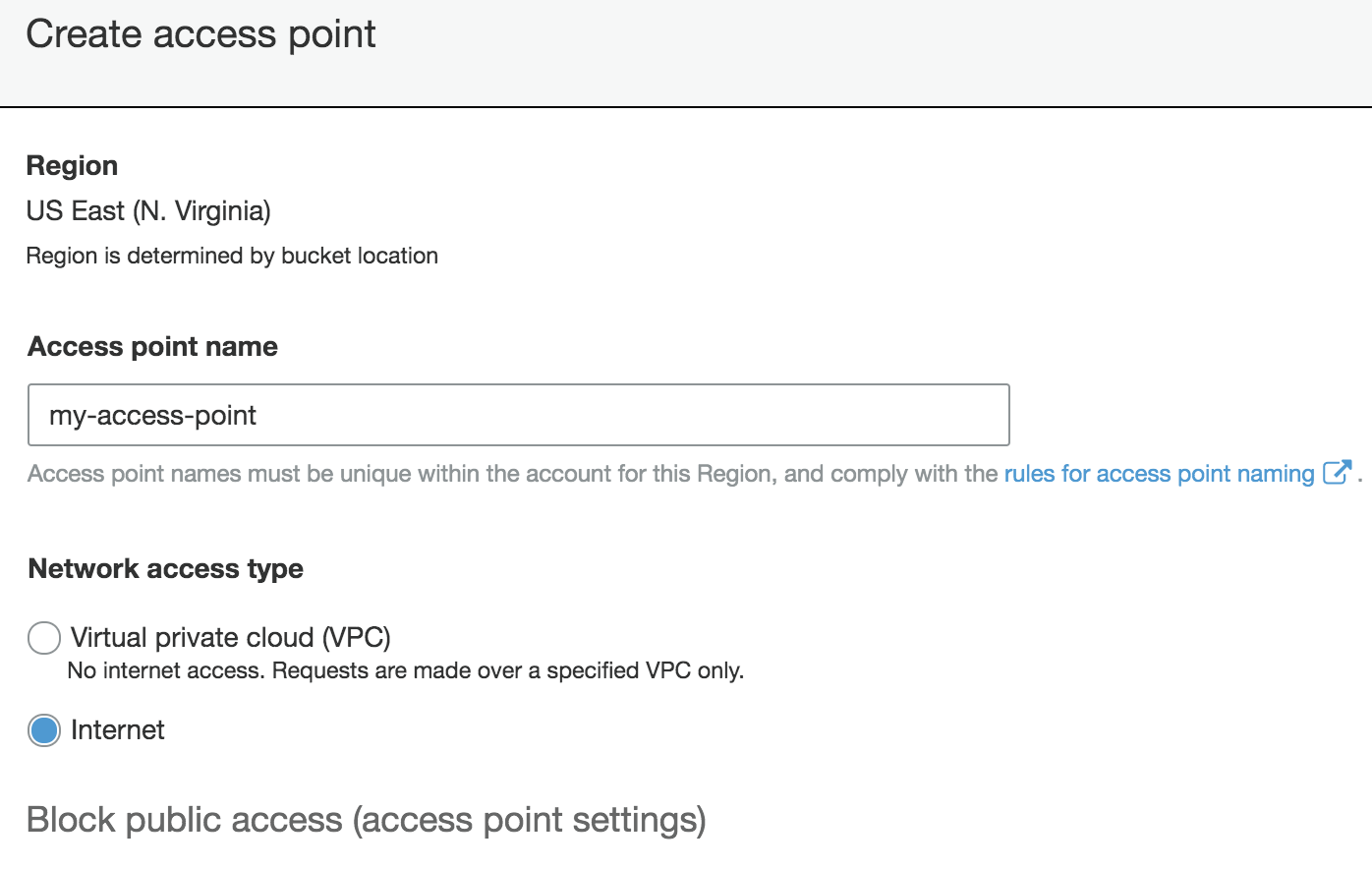

S3 Access Points can be thought of as a proxy to an S3 bucket. In some ways, these appear to be a more featureful version of VPC Endpoints, and are free. However, these can be made public, meaning they can allow public access to an otherwise private S3 bucket. Edit 2020-01-02: These can be made publicly accessible, but only if the S3 bucket is already public.

Access Points can be restricted to a VPC, and can carry a policy that only allows certain actions from certain principals to come through. As these are new, it’d be good to get a head start on possible future problems by creating an SCP to deny Internet-accessible Access Points, via the SCP listed here under “Example Service Control Policy”.
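
As a rough sketch of what such an SCP looks like, the Python below builds a policy that denies creating any Access Point whose network origin is not a VPC, then prints it as JSON. It relies on the `s3:AccessPointNetworkOrigin` condition key. The `Sid` and variable name are my own, and you should compare the result against the AWS example referenced above before deploying it.

```python
import json

# Sketch of an SCP denying creation of Internet-accessible S3 Access Points.
# Assumption: the Sid and variable name are illustrative, not from AWS.
deny_internet_access_points_scp = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyNonVpcAccessPoints",
            "Effect": "Deny",
            "Action": "s3:CreateAccessPoint",
            "Resource": "*",
            "Condition": {
                # Deny unless the Access Point is restricted to a VPC.
                "StringNotEquals": {"s3:AccessPointNetworkOrigin": "VPC"}
            },
        }
    ],
}

print(json.dumps(deny_internet_access_points_scp, indent=2))
```

Because this only blocks creation, any Access Points that already exist would need to be reviewed separately.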

Tag Policies

For a while now we’ve had SCPs that allow you to restrict, at the AWS Organization level, what actions are allowed across all the AWS accounts at a company. With Tag Policies you can get insight into the current tags on resources and identify non-compliant ones. This is really just a way to generate reports. Once you’ve confirmed that all resources are compliant, you can then use SCPs to enforce those policies. To many, this is the most important compliance-related announcement from re:Invent.
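
The reporting idea can be illustrated with a small sketch: given the tags on each resource and a set of tagging rules, list what is non-compliant. This does not use the actual Tag Policy syntax or any AWS API. The rule format, function name, and ARNs are all hypothetical.

```python
def noncompliant_resources(resources, required_tags):
    """Report tagging problems per resource.

    resources:     maps resource ARN -> dict of its tags
    required_tags: maps tag key -> set of allowed values, or None for any value
    Returns a dict of ARN -> list of problem descriptions (compliant
    resources are omitted).
    """
    report = {}
    for arn, tags in resources.items():
        problems = []
        for key, allowed in required_tags.items():
            if key not in tags:
                problems.append(f"missing tag {key}")
            elif allowed is not None and tags[key] not in allowed:
                problems.append(f"tag {key} has disallowed value {tags[key]!r}")
        if problems:
            report[arn] = problems
    return report


# Hypothetical inventory and rules.
resources = {
    "arn:aws:ec2:us-east-1:111111111111:instance/i-0abc": {
        "CostCenter": "100", "Owner": "alice"},
    "arn:aws:ec2:us-east-1:111111111111:instance/i-0def": {
        "CostCenter": "999"},
}
required_tags = {"CostCenter": {"100", "200"}, "Owner": None}

for arn, problems in noncompliant_resources(resources, required_tags).items():
    print(arn, problems)
```

Only the second instance is reported, with one disallowed value and one missing tag. That kind of list is what you would work down to zero before turning on enforcement.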

Session tags

AWS continues to lead customers toward using Attribute-Based Access Control (ABAC) where you use resource tags and now session tags, along with IAM policies, as a way of defining who can access what. This allows for some new ways of doing IAM on AWS, specifically when using an identity provider, but beware that no one has yet created any tools to help you understand or audit an ABAC strategy. This video helps explain this feature and the ABAC concept.
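
To give a feel for ABAC, the sketch below shows an IAM policy that allows starting and stopping EC2 instances only when the instance’s `project` tag equals the caller’s `project` principal tag, which is where session tags show up during a session. The tag key `project` and the helper function are assumptions chosen for illustration. The helper is a toy stand-in for the condition, not how IAM evaluates policies.

```python
import json

# Sketch of an ABAC-style IAM policy. The tag key "project" is hypothetical.
abac_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["ec2:StartInstances", "ec2:StopInstances"],
            "Resource": "*",
            "Condition": {
                "StringEquals": {
                    # Resource tag must equal the caller's principal tag.
                    "aws:ResourceTag/project": "${aws:PrincipalTag/project}"
                }
            },
        }
    ],
}


def session_may_act(session_tags, resource_tags, key="project"):
    """Toy model of the condition above: the session must carry the tag and
    its value must exactly match the resource's value for the same key."""
    return key in session_tags and session_tags.get(key) == resource_tags.get(key)


print(json.dumps(abac_policy, indent=2))
print(session_may_act({"project": "blue"}, {"project": "blue"}))  # True
print(session_may_act({"project": "blue"}, {"project": "red"}))   # False
```

One policy like this can replace a separate policy per project, but it also means access now depends on who is allowed to set tags, which is part of why auditing an ABAC setup is harder.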

Other

- KMS asymmetric keys: You can now use public/private key pairs with KMS, for RSA and some other key types, with the private key never leaving KMS.

- UltraWarm Elasticsearch: For those using an ELK stack, this provides tiered storage for Elasticsearch, so that less frequently accessed data can be stored less expensively (up to 90% cost reduction, according to their blog post).

- Nitro Enclaves provide a constrained environment for you to run custom code. This is partly described in this video.

- Amazon Fraud Detector is a managed service for detecting online payment fraud and the creation of fake accounts.

- Amazon CodeGuru: This is a code linter-as-a-service, except hopefully with smarter checks. It is definitely more expensive, at a cost of $0.75 per 100 lines of code. To put that in perspective, at that rate it would cost roughly $200K to scan the Linux kernel (assuming about 27 million lines), except it wouldn’t report anything because this only works with Java. This can be integrated with GitHub, so people have already joked about submitting large pull requests to projects that use it to make them waste money. It also has a profiler in it, for finding what code takes the most time, so you can optimize it, and save yourself money, which of course you’ll need to do after spending so much on CodeGuru. At the current price point, and especially if you’re not a Java shop, this service can largely be ignored for now.
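
The CodeGuru pricing above scales linearly with the amount of code scanned, so a quick estimate is easy to sketch. The price is the $0.75 per 100 lines quoted above. The function name and the 50,000-line example codebase are hypothetical.

```python
PRICE_PER_100_LINES_USD = 0.75  # price quoted above


def codeguru_review_cost(lines_of_code, price_per_100_lines=PRICE_PER_100_LINES_USD):
    """Estimate the cost in USD of scanning the given number of lines once."""
    return lines_of_code / 100 * price_per_100_lines


# Hypothetical 50,000-line Java service.
print(f"${codeguru_review_cost(50_000):,.2f}")  # $375.00
```

Plugging in your own line count gives a per-scan figure to weigh against what a conventional linter costs.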

Conclusion

You should turn on Access Analyzer in all accounts and all regions and collect the resulting events. It is free, and only alerts you to potential problems, so it has no negative impact. You should also start using Tag Policies to understand where you might have work to do on a company-wide tagging strategy. The other announcements you can consider on a case-by-case basis.